Computer Vision and Imaging / Robot Vision: MATLAB assignment

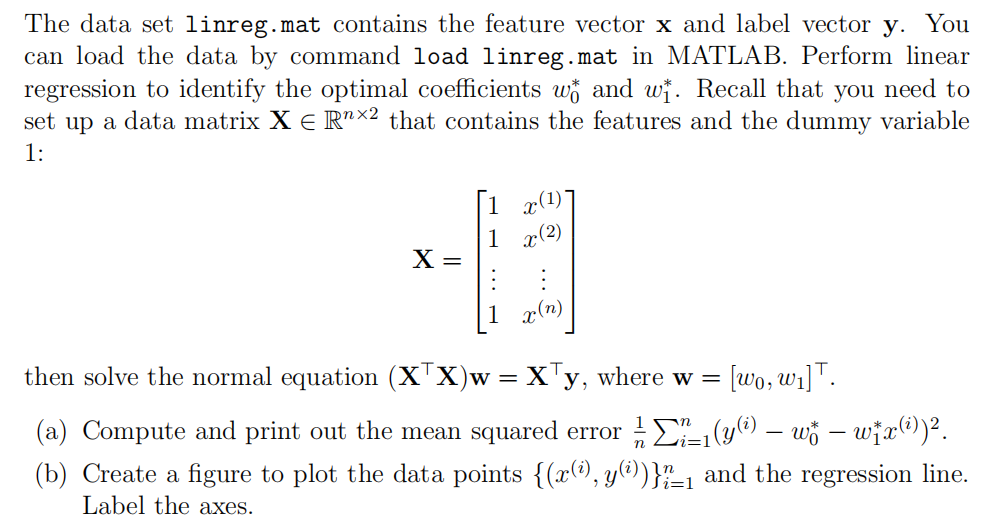


• This assignment is to be done in MATLAB. Put all your code together in one executable .m file and submit it on Blackboard.

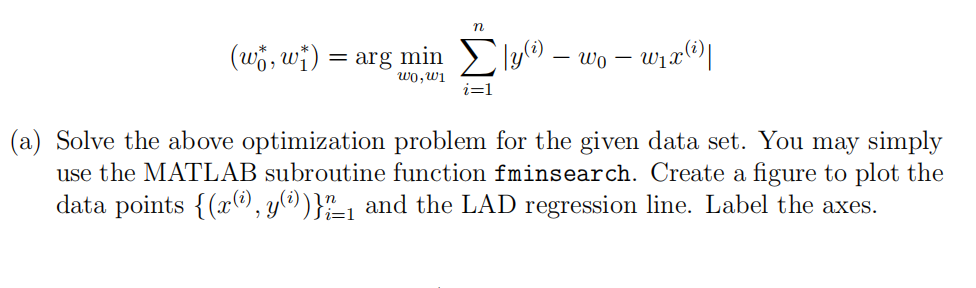

The data set linreg+outlier.mat contains the feature vector x and the label vector y, but one of the points is an outlier. In this case, we consider robust linear regression, the so-called least absolute deviation (LAD) problem:

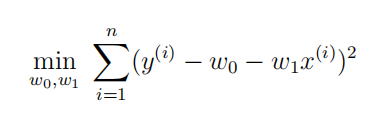

(b) Solve the corresponding least squares (LS) problem:

Plot the resulting LS regression line (in a different line style and color) in the same figure as in part (a).
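The two fits can be compared with a short sketch. The equations themselves are not reproduced in the text above, so this assumes the model y ≈ a·x + b, an LAD objective of Σ|a·x_i + b − y_i| and an LS objective of Σ(a·x_i + b − y_i)². The names `theta_lad` and `theta_ls` and the synthetic data are illustrative assumptions, and solving LAD as a linear program with `linprog` (which requires the Optimization Toolbox) is one possible approach, not necessarily the one intended by the assignment.

```matlab
% Sketch: LAD vs. LS line fitting on synthetic data (assumed model y ~ a*x + b).
% Replace the synthetic data with load('linreg+outlier.mat') to use the real set.
rng(0);                                  % reproducible noise
n = 20;
x = (1:n)';                              % feature vector (column)
y = 2*x + 1 + 0.1*randn(n,1);            % hypothetical ground truth: a = 2, b = 1
y(10) = y(10) + 20;                      % inject one outlier

A = [x, ones(n,1)];                      % design matrix for theta = [a; b]

% Least squares: minimize sum((A*theta - y).^2) via the backslash operator.
theta_ls = A \ y;

% LAD as a linear program in z = [a; b; t]: minimize sum(t)
% subject to -t <= A*[a; b] - y <= t (so t bounds each absolute residual).
f     = [0; 0; ones(n,1)];
Aineq = [ A, -eye(n);
         -A, -eye(n)];
bineq = [ y; -y];
opts  = optimoptions('linprog', 'Display', 'none');
z     = linprog(f, Aineq, bineq, [], [], [], [], opts);   % Optimization Toolbox
theta_lad = z(1:2);

% Plot the data and both fitted lines in one figure.
figure; hold on;
plot(x, y, 'ko', 'DisplayName', 'data');
plot(x, A*theta_lad, 'b-',  'LineWidth', 1.5, 'DisplayName', 'LAD');
plot(x, A*theta_ls,  'r--', 'LineWidth', 1.5, 'DisplayName', 'LS');
legend('Location', 'northwest'); xlabel('x'); ylabel('y');
hold off;
```

On data like this, the LS line is pulled toward the outlier because squared residuals weight it heavily, while the LAD line stays close to the bulk of the points.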

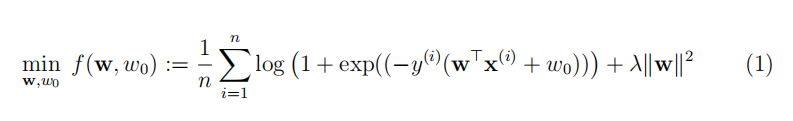

The handwritten digit dataset mnist5k.mat is derived from the original MNIST gray-scale image dataset: samples of digit 9 belong to class 1 and all other samples belong to class −1. It contains a training set Xtr with labels Ytr and a testing set Xte with labels Yte. The training and testing sets each contain 5000 sample images, and each sample is stored as a vector of 784 gray-scale pixel values between 0 and 255. We do binary classification using logistic regression:

(a) Visualize the first 9 training images in Xtr using the MATLAB built-in function imshow. Display the 9 images as a 3 × 3 grid in the same figure using subplot. Note that you need to reshape each sample into a 28 × 28 matrix before visualization.
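A minimal sketch of this visualization step follows. It assumes Xtr stores one image per row (n × 784) and that pixels are in row-major order, so each reshaped matrix is transposed; if the file stores images column-major, drop the transpose. Random stand-in data is used so the snippet runs without mnist5k.mat.

```matlab
% Sketch: show the first 9 samples as a 3 x 3 grid.
% Assumes Xtr is n x 784 with one image per row; random stand-in data used here.
Xtr = randi([0 255], 9, 784);            % replace with: load('mnist5k.mat')

figure;
for i = 1:9
    img = reshape(Xtr(i,:), 28, 28)';    % transpose: assumes row-major pixel order
    subplot(3, 3, i);
    imshow(img, [0 255]);                % map the range 0..255 to black..white
    title(sprintf('Sample %d', i));
end
```

Passing the display range `[0 255]` explicitly matters here because the data is of class double; without it, imshow would treat every value above 1 as white.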

(b) Write a function named logit (saved as logit.m) that implements the gradient descent algorithm for the logistic regression problem (1) using a constant step size η. The input arguments of logit are Xtr, Ytr, Xte, Yte, the constant step size η, and the regularization parameter λ. The output of logit should be the training accuracy, the test accuracy, and the objective value at each iteration (stored as a vector). You should adopt a proper stopping criterion for your gradient descent implementation. Call your function logit with suitable values of η and λ. Note that your main file is supposed to be separate from logit.m.

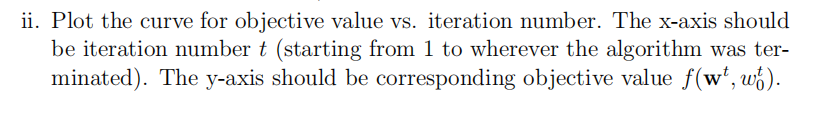

For this part, you need to:

i. Print out the final training accuracy and test accuracy (a reasonable test accuracy is above 94%).
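The gradient descent procedure described in part (b) can be sketched as below. Problem (1) is not reproduced in the text, so the objective used here is an assumption: the mean logistic loss, (1/n) Σ log(1 + exp(−y_i x_iᵀw)), plus the L2 penalty (λ/2)‖w‖². Scaling pixels by 255, appending a bias column, the tolerance, the iteration cap and the synthetic data are also illustrative choices. The function returns final accuracies together with the per-iteration objective vector, which is one reading of the required outputs. For a self-contained script, `logit` is written as a local function at the end of the file (supported since R2016b); for submission it would go in its own logit.m as the task requires.

```matlab
% Sketch: L2-regularized logistic regression trained by gradient descent.
% Synthetic stand-in data; with the real set use load('mnist5k.mat') instead.
rng(0);
w_true = [2; -1; 1.5; -2; 1];                    % hypothetical separating direction
Xtr = 255*rand(200, 5);  Ytr = sign((Xtr/255 - 0.5)*w_true);
Xte = 255*rand(200, 5);  Yte = sign((Xte/255 - 0.5)*w_true);

eta = 0.5;  lambda = 1e-3;                       % illustrative values, not tuned
[accTr, accTe, obj] = logit(Xtr, Ytr, Xte, Yte, eta, lambda);
fprintf('train acc = %.2f%%, test acc = %.2f%%, iterations = %d\n', ...
        100*accTr, 100*accTe, numel(obj));

function [accTr, accTe, obj] = logit(Xtr, Ytr, Xte, Yte, eta, lambda)
    % Scale pixels to [0,1] and append a bias column (both are assumptions).
    Xtr = [Xtr/255, ones(size(Xtr,1),1)];
    Xte = [Xte/255, ones(size(Xte,1),1)];
    n = size(Xtr,1);
    w = zeros(size(Xtr,2),1);
    maxIter = 5000;  tol = 1e-8;                 % assumed stopping parameters
    obj = zeros(maxIter,1);
    for k = 1:maxIter
        m = Ytr .* (Xtr*w);                      % margins y_i * x_i' * w
        % Numerically stable mean(log(1 + exp(-m))) plus the L2 penalty.
        obj(k) = mean(max(0,-m) + log1p(exp(-abs(m)))) + 0.5*lambda*(w'*w);
        % Stop once the relative change in the objective is negligible.
        if k > 1 && abs(obj(k-1) - obj(k)) <= tol*max(1, abs(obj(k-1)))
            break;
        end
        g = -(Xtr' * (Ytr ./ (1 + exp(m))))/n + lambda*w;   % gradient of objective
        w = w - eta*g;                           % constant-step update
    end
    obj = obj(1:k);                              % keep only the iterations run
    accTr = mean(sign(Xtr*w) == Ytr);            % fraction classified correctly
    accTe = mean(sign(Xte*w) == Yte);
end
```

Plotting `obj` against the iteration index is a quick check on the step size: with a constant η that is small enough, the curve should decrease monotonically, and oscillation or growth suggests η is too large.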

In this problem, we use PCA to reduce the dimension of raw face images. Load the data file face.mat, which provides the variable X, a data matrix of size 400 × 10304 in which each row vector represents a gray-scale image of originally 112 × 92 pixels.

First, center the data points (i.e., the row vectors) in X by subtracting their mean mu from each row. Denote the preprocessed data matrix by the variable X_0. Apply PCA to X_0 and reduce the data's dimension to k = 350. You can use the following command (taking the i-th image as an example) to recover the image:

Recon = X_0(i,:)*V_k*V_k' + mu,

where V_k = V(:,1:k) contains the first k principal components. Recall that the set of principal components can be computed with the built-in function svd. Remember to reshape the vector Recon into a 112 × 92 matrix to show the image.

Pick any image from X, and show the effect of PCA by comparing the original image and the recovered image side by side using subplot. Give a title to each subfigure.
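The centering, projection and reconstruction steps above can be sketched as follows. Random stand-in data of the stated size replaces face.mat so the snippet runs on its own, which means the displayed images are noise rather than faces. Using the economy-size SVD is an assumed choice to avoid forming a full 10304 × 10304 matrix, and the image index i = 1 is arbitrary.

```matlab
% Sketch: PCA reconstruction with k components via the economy-size SVD.
% Random stand-in data of the stated size; use load('face.mat') for real faces.
rng(0);
X = 255*rand(400, 10304);                % one 112 x 92 image per row (flattened)

mu  = mean(X, 1);                        % 1 x 10304 mean image
X_0 = X - mu;                            % center rows (implicit expansion, R2016b+)

[~, ~, V] = svd(X_0, 'econ');            % columns of V: principal directions
k   = 350;
V_k = V(:, 1:k);

i = 1;                                   % any image index in 1..400
Recon = X_0(i,:)*V_k*V_k' + mu;          % project onto k components, add mean back

figure;
subplot(1,2,1); imshow(reshape(X(i,:), 112, 92), [0 255]);  title('Original');
subplot(1,2,2); imshow(reshape(Recon,  112, 92), [0 255]);  title(sprintf('PCA, k = %d', k));
```

Because V_k has orthonormal columns, V_k*V_k' is an orthogonal projection, so the reconstruction error of a centered image can never exceed the norm of that centered image.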
